R For SEO Part 6: Using APIs In R

Wow, we’re at part 6 of my R for SEO series. Welcome back. I really hope you’re finding this useful, and by now have started to use R in your work. Today we’re going to look at one of my favourite topics: using APIs in R.

There are, obviously, millions of APIs available, so today I will just look at a couple of my favourite SEO-specific ones which will give you a basis for using the different types. We’re going to cover SERPAPI, SEMRush and SE Ranking, how to get data out of them, how to authenticate with them and how to work with the data they give you.

Now that’s out the way, let’s get started.

What Is An API?

An API – or Application Programming Interface – is a way of getting data out of, or pushing data into, another program or application from your own program or application.

I promise you, this is nowhere near as complicated as it sounds. In fact, we’ve already used two APIs fairly extensively in this series – the Google Analytics and Search Console APIs. These came from pre-built packages, but using your own API of choice isn’t that difficult either.

Let’s take a look at how to do this.

Read The Documentation

Every API does, or should, come with extensive documentation which tells you how to create your queries and run your API calls. Sadly, since R isn’t as popular as Python in the SEO space, there is a lack of R-specific documentation for most APIs, but once you have the basics of creating your calls down, you’ll be able to work most of them out.

The Anatomy Of HTTP API Requests

In R, and most other languages, the majority of APIs are called via http requests – essentially creating a URL and downloading the content of that URL into a data frame. The outputs come in a variety of formats, but JSON is the most common, and also my preferred output.

In their most common form, a http API request, or API call, has the following core elements:

- The endpoint: The core URL that the API is hosted on

- The authentication: Usually a text string called the API key. Some platforms such as the SE Ranking API do this differently and I’ll cover that later in this article

- The query: The parameters in the URL that tell the API what data we want out of it

There are obviously different elements in every API, but I’ve found that if you think of the call in that format, it helps. Again, read the documentation.
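As a rough sketch of that three-part anatomy, here’s how the pieces might be pasted together in base R. The endpoint, key and parameter names below are placeholders I’ve made up for illustration, not a real API.

```r
# Hypothetical values for illustration only: this is not a real API
endpoint <- "https://api.example.com/search"  # the endpoint
apiKey <- "XXXXXXXX"                          # the authentication
query <- "q=tv%20units"                       # the query

# Join the three parts into a single request URL
requestUrl <- paste(endpoint, "?", query, "&api_key=", apiKey, sep = "")

print(requestUrl)
```

Every call later in this piece follows the same shape; only the endpoint and the parameter names change.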

Now we’ve got that down, let’s think about how JSON works in R.

Working With JSON In R

JSON, or JavaScript Object Notation, is a standard way for data to be exported from APIs and many other systems. It’s great, but it’s not always “tidy” when it comes to working with it in R. Due to the amount of data available and how R handles nesting of data, it can be a little challenging.

Fortunately, there are packages and conventions within R to make working with this data an easier experience.

The jsonlite Package

There are lots of JSON-related packages available on CRAN – over 60, last time I checked – but jsonlite has always served me well, so it’s the one I’m using for this series. As you go further into your R journey, you may well find a package that you prefer, and that’s fine, but I hope this gives you a grounding in using JSON APIs in R.

You can install the jsonlite package with the usual commands:

install.packages("jsonlite")

library(jsonlite)

And you can read the jsonlite documentation here.

Now that’s installed, let’s put together our first API call using SERPAPI.

Using SERPAPI In R

There are numerous search engine scraping APIs available, but SERPAPI is my favourite. It offers a fantastic amount of data from almost any geographical area for a very low cost and it works brilliantly in R. I’m a fan.

I’ve built a number of tools with SERPAPI over the last couple of years, but here’s a really simple one for our first call.

Let’s see what the SERP looks like for the term “TV Units” in the UK.

Building Our First SERPAPI Call In R

As I mentioned earlier, there are components to every API call, one of which being the authentication, or the API key. Fortunately, SERPAPI is very easy to sign up for and even offers a free plan of 100 calls a month, which will be more than enough for all the tutorials in this series, but if you do feel like you’ll need more calls, you won’t find many better platforms for the price.

You will need API keys to go through these tutorials as I can’t share mine, but the key focus for later pieces will be ones you can use for free, like SERPAPI. Firstly, go to this link and sign up for free.

Now you’ve signed up, let’s create an object using your API key. This is easy and can be done like so:

serpApiKey <- "XXXXXXXXXXXX"

Replace the X’s with your SERPAPI key, but remember to keep the speech marks.
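If you’d rather not keep the key in your script, one common option is to read it from an environment variable. This is only a sketch: the variable name SERPAPI_KEY is my own choice, and Sys.setenv is used here just so the example runs on its own (in practice you’d set it outside the script, for example in your .Renviron file).

```r
# SERPAPI_KEY is a hypothetical name chosen for this example
Sys.setenv(SERPAPI_KEY = "XXXXXXXXXXXX")

# Read the key back into the object used by the rest of the tutorial
serpApiKey <- Sys.getenv("SERPAPI_KEY")

# Fail early if the key is missing
if (serpApiKey == "") stop("SERPAPI_KEY is not set")
```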

Now we need to create variables for the rest of the API call.

Creating A SERPAPI Endpoint Object In R

We’re going to use SERPAPI as an example here, but to use most APIs in R, you’re going to need to create an endpoint object. Here’s how to do it.

serpApiEndpoint <- "https://serpapi.com/search.json?engine=google"

This is a simple object that just brings the SERPAPI endpoint into our R environment. You can recreate this with most APIs that use http requests; just change the endpoint URL accordingly.

Now we have our endpoint and API key variables created, we need to put our actual query together.

Creating Our SERPAPI Query In R

This is the third part of an API call, and it’s where the documentation becomes really important: we’re going to tell SERPAPI what we want from its API.

Let’s create another variable for our query, remembering that our goal is to see the SERP for “TV units” in the UK. Here’s how to do that.

serpApiCall <- paste(serpApiEndpoint, "&q=tv%20units", "&location=United+Kingdom&google_domain=google.co.uk", "&api_key=", serpApiKey, sep = "")

Let’s break that query parameter down.

- paste(: We’re using the paste function from base R to create a string of our various parameters

- serpApiEndpoint: We’re calling the API endpoint we created earlier, invoking the Google search engine

- “&q=tv%20units”: The “&q=” parameter is adding the query to our URL – API calls are generally constructed using & to define different parameters. In this case our query is “tv units”. You’ll notice that we’ve used %20 in the query here – this is the URL encoding for a space

- &location=United+Kingdom&google_domain=google.co.uk: We’re looking at the UK version of Google

- “&api_key=”, serpApiKey: This is the parameter for the API key and in this case, rather than put the whole thing into our call, we’re using our serpApiKey object

- sep = “”): As with previous pieces, the sep parameter tells R what separator we want to use in our final pasted output. As we don’t want there to be a separator, the speech marks are empty. Don’t forget your closing bracket!
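Rather than typing %20 by hand, base R’s URLencode function can handle the encoding. The sketch below uses a helper I’ve called buildQuery (a made-up name, not part of any package) to turn a named list of parameters into a query string.

```r
# buildQuery is a hypothetical helper written for this example
buildQuery <- function(params){
  # Encode each value so spaces and reserved characters are URL-safe
  encoded <- vapply(
    params,
    function(value) utils::URLencode(as.character(value), reserved = TRUE),
    character(1)
  )
  # Join name=value pairs with & between them
  paste(names(params), encoded, sep = "=", collapse = "&")
}

buildQuery(list(q = "tv units", location = "United Kingdom"))
# "q=tv%20units&location=United%20Kingdom"
```

Note that this encodes the space in the location as %20 rather than the + used in the call above. Both are common ways of writing a space in a query string, but it’s worth checking the API documentation for which one it expects.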

Now we’ve created our full API Query, let’s run it in our R console.

Running A SERPAPI Query In R

Now we’ve built our first SERPAPI http request, we need to call it into our R environment using the jsonlite package.

Here’s how to do that:

serpAPI1 <- fromJSON(serpApiCall, simplifyDataFrame = TRUE)

This will take a couple of seconds to run, but once it does, you’ll have the entire SERP for the keyword “TV units” in the UK in your R environment.

When you run this command, you’ll see the following in your RStudio environment explorer.

Now we have our SERPAPI data in JSON format in our R environment. Let’s see how to explore that.

Examining JSON Data In R

As you’ll have seen from the above, there’s not really that much involved in getting JSON data from an API, but it’s all nested, which can make the initial exploration a little challenging as it’s a series of lists. Fortunately, the SERPAPI output is cleanly formatted and denoted, so it’s not too difficult, and it’s way better than having it in separate CSV files, the way some APIs do.

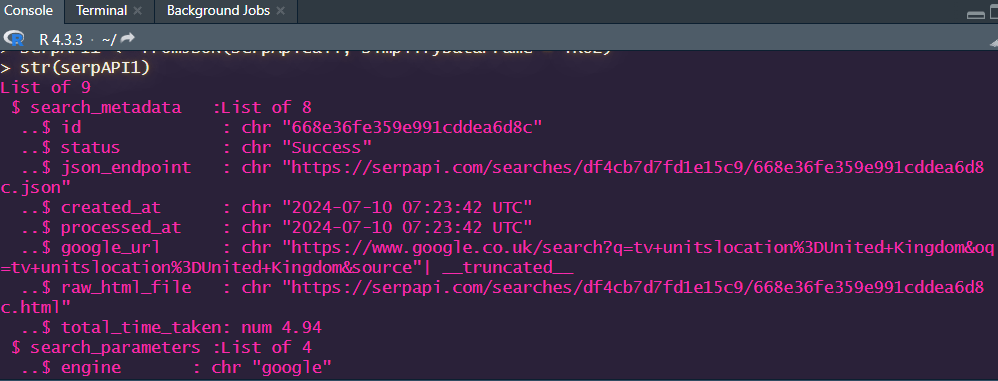

If we start by using the str() command, we’ll see the following output:

str(serpAPI1)

As you can see, what we have here is a series of lists with data frames nested within them. It looks a little ugly at first, but it’s actually simple once you get to grips with it.

Essentially, we need to think of each header we found in our str command as a different data frame, so we’ll be using the $ operator in our exploration more than once.

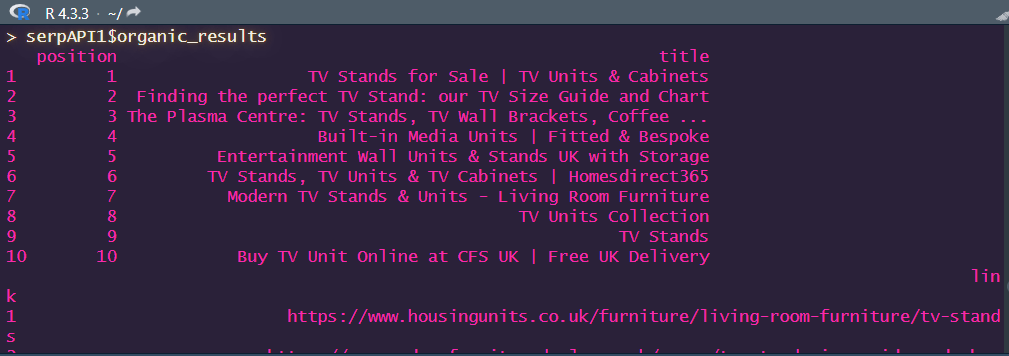

Let’s take a look at the organic results from our SERPAPI query:

serpAPI1$organic_results

This will give you the following output:

We can do the same with every frame within our export. At the time of writing, we can see that ikea.com had the number 10 position in the UK for the TV units term. We can add extra parameters to explore each column within the dataframes in the list, for example:

serpAPI1$organic_results$title

And that’s how we explore JSON data in R.
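If you don’t want to spend API calls while practising, the same exploration works on a small hand-written JSON string. The field names mirror the ones used above, but the values are invented for this sketch.

```r
library(jsonlite)

# A tiny, made-up JSON response with the same shape as the fields used above
toyJson <- '{
  "search_parameters": {"q": "tv units"},
  "organic_results": [
    {"position": 1, "title": "Example result one"},
    {"position": 2, "title": "Example result two"}
  ]
}'

toySerp <- fromJSON(toyJson, simplifyDataFrame = TRUE)

# The nested list of search parameters
toySerp$search_parameters$q

# organic_results becomes a data frame, so $ pulls out a single column
toySerp$organic_results$title
```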

Now let’s scale our API collection.

Running Multiple JSON API Calls In R

I’ll be honest here – I’ve run tons of JSON APIs with R over the years, and I have found very few that work the same way. It very much depends on the API and the output, so always budget some time and API queries for trial and error, especially if your API charges by the request.

Now we know how to run singular SERPAPI queries in R, but we’ve just got the raw JSON data, which means we’ve got to do more work to get the information we want into a data frame. Why don’t we create a function that can dynamically create our API calls and extract the information we want into a data frame all in one go?

Here’s how.

Multiple SERPAPI Queries In R

Firstly, you’ll want a data frame with a few keywords that you’d like to check. You can either put them into a CSV file and read them in using the read.csv command, or you can create it directly in R like so:

keywords <- data.frame(keyword = c("sliding wardrobes", "bookcases", "tv units", "dining tables"))

Alright, so we’ve got our data frame (call it “keywords” to follow along). Now we need to create a function to dynamically build our API calls and to pull the ranking URLs, domains, shown title and description and the position it’s in.

Firstly, we’ll want to re-visit our domainNames function from Part 4, which will strip a URL to a domain name. The code is below:

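domainNames <- function(x){

# Strip the protocol and www., then keep the part before the first slash for every URL in x

vapply(strsplit(gsub("http://|https://|www\\.", "", x), "/"), function(parts) parts[1], character(1))

}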

Now let’s look at our function.

A SERPAPI Function In R

This is a fairly simple R function, and if you’ve been following the series along, there shouldn’t be anything surprising.

serpAPIFun <- function(x){

require(tidyverse)

require(jsonlite)

apiCall <- paste(serpApiEndpoint, "&q=", x, "&location=United+Kingdom&google_domain=google.co.uk",

"&api_key=", serpApiKey, sep = "")

apiCall <- gsub(" ", "%20", apiCall)

serpData <- fromJSON(apiCall, simplifyDataFrame = TRUE)

serpData$organic_results$domain <- domainNames(serpData$organic_results$link)

output <- data.frame(serpData$search_parameters$q, serpData$organic_results$link,

serpData$organic_results$title, serpData$organic_results$snippet,

serpData$organic_results$domain, serpData$organic_results$position)

colnames(output) <- c("Keyword", "URL", "Title", "Description", "Domain", "Position")

return(output)

}

As always, let’s break it down:

How The Function Works

This looks a lot more complicated than it actually is.

- serpAPIFun <- function(x){: Our function is called serpAPIFun and uses an x variable. You can, obviously, call it whatever you like. I’ve simplified it for this piece and just used one variable, but you can add others

- require(): We’re adding in the packages that we need for this function. In this case, we need the tidyverse and jsonlite

- apiCall <- paste(serpApiEndpoint, “&q=”, x, “&location=United+Kingdom&google_domain=google.co.uk”, “&api_key=”, serpApiKey, sep = “”): As in the single example above, we’re pasting our API URL together, with x being our keyword from our dataframe and using the UK region. If you wanted to use different regions, this could be a y variable

- apiCall <- gsub(” “, “%20”, apiCall): As before, we’re using gsub to replace spaces with %20 so it doesn’t break our API call

- serpData <- fromJSON(apiCall, simplifyDataFrame = TRUE): Now our API call is completed, we can use jsonlite’s fromJSON function to pull the data into our serpData object

- serpData$organic_results$domain <- domainNames(serpData$organic_results$link): This will run our domainNames function on the URL and create a new column of the domain name

- output <- data.frame(: We’re creating our output dataframe, with the columns chosen above (keyword, URL, title, description, domain, position), but you can use whichever you would like from the dataset

- colnames(output) <- c(: This uses the colnames command to rewrite the column headers in our output dataframe

- return(output): The finale of our function is to return our completed data frame
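To illustrate the idea of a y variable for the region, here’s a minimal sketch that only builds the URL and doesn’t call the API. The function name buildSerpCall and its endpoint and key arguments are my own inventions for this example, and I’ve left out the google_domain parameter for brevity, since it would also need to change with the region.

```r
# buildSerpCall is a hypothetical helper: x is the keyword, y is the location
buildSerpCall <- function(x, y, endpoint, key){
  apiCall <- paste(endpoint, "&q=", x, "&location=", y, "&api_key=", key, sep = "")
  # Encode spaces in both the keyword and the location
  gsub(" ", "%20", apiCall)
}

buildSerpCall("tv units", "United Kingdom",
              "https://serpapi.com/search.json?engine=google", "XXXX")
```

Whether a given location string is accepted is something to check in the SERPAPI documentation before spending calls on it.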

Now to run it, we need to use the following command, which will run through every API call:

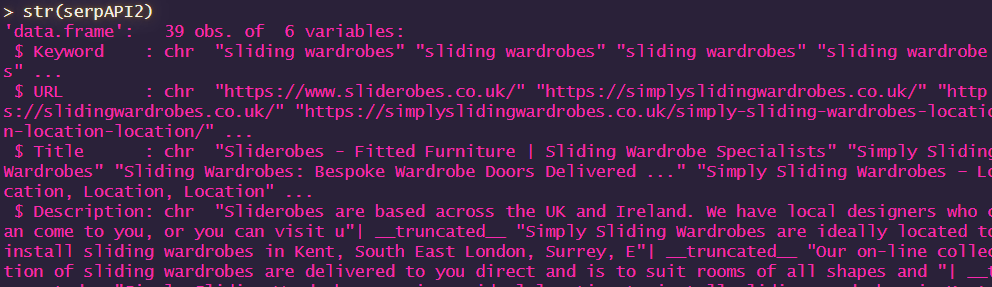

serpAPI2 <- reduce(lapply(keywords$keyword, serpAPIFun), bind_rows)

And it will return the following:

Great, right? I’ll be running through the different kinds of loops and apply commands in R in the next couple of articles, but for now, that’s how you can run multiple SERPAPI queries in R.

Now let’s look at the SE Ranking API.

Using The SE Ranking API In R

SE Ranking is one of my favourite SEO tools – so much so, I chose to bring it to my team at Harvest Digital and I am also an affiliate. It’s a truly fantastic platform that offers everything a number of other tools do at a cheaper price, and you can’t fault the speed, functionality and support that the team give. Check it out from my link and tell them I sent you!

Now when it comes to the API, it’s a very full featured one, but it can be a little tricky to get some of your IDs working. So first, we want to find out all the available databases.

Let’s take a look at how we can construct a function in R to gather all our search engine IDs from the SE Ranking API and, as always, we’ll break down how it works.

Finding Search Engine IDs From The SE Ranking API In R

We’ll start with a really simple function to get all the available search engine IDs from the SE Ranking API into our R environment.

First, we want to create an object of our API key. We’ll call this seRankingAPI. Obviously you’ll need your own API key for this.

seRankingAPI <- "XXXXXXXX"

Replace the X’s with your own API key.

Now let’s put our function together.

seRankingDBs <- function(x){

apiCall <- paste("https://api4.seranking.com/system/search-engines?token=", x,

sep = "")

databases <- fromJSON(apiCall, simplifyDataFrame = TRUE)

output <- data.frame(databases)

}

Let’s see how it works.

How The SE Ranking Database Function Works

Here’s that phrase again: let’s break it down.

- seRankingDBs <- function(x){: We’re creating a function called seRankingDBs, with a single x variable

- apiCall <- paste(“https://api4.seranking.com/system/search-engines: As before, we’re constructing our API call using the paste command. You can see that we’ve used the api4.seranking.com domain and the /system/search-engines endpoint

- ?token=”, x, sep = “”): Here, we’re adding our API key as a query string. In this case, the API key query is called “token” and x is the seRankingAPI object that we just created, which we pass in when we run the function. Again, using the sep = “” parameter means there is nothing to separate the objects that we’re pasting together. That’s our URL constructed

- databases <- fromJSON(apiCall, simplifyDataFrame = TRUE): As with SERPAPI, we’re using jsonlite’s fromJSON command to download the JSON data into an object called databases

- output <- data.frame(databases)}: Finally, we’re creating our output – a dataframe of the databases in this case

Told you it was simple.

To run it, simply type:

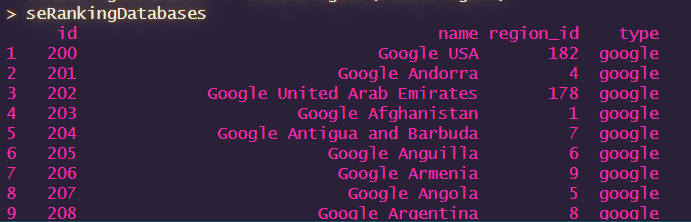

seRankingDatabases <- seRankingDBs(seRankingAPI)

And that will give you the following output:

Now let’s create another function to get search volume on our keywords from SE Ranking in R.

Search Volume From The SE Ranking API In R

Before we get going, we’ll want to know our search engine region ID. Fortunately, we’ve already got all our databases into our R environment, so it’s not difficult to find the ID we want.

Let’s say we want to look at Google UK: from our list, the ID is 180.
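If the list is long, filtering the data frame can be quicker than scrolling through it. The sketch below uses a stand-in data frame, because the real column names depend on what the API returns: id and name are assumptions, and the second row is a placeholder, so run str(seRankingDatabases) to see what you actually have.

```r
# Stand-in for seRankingDatabases: the column names and second row are invented
toyDatabases <- data.frame(
  id = c(180, 999),
  name = c("Google UK", "Placeholder engine"),
  stringsAsFactors = FALSE
)

# Keep only the rows whose name mentions "UK"
ukRows <- toyDatabases[grepl("UK", toyDatabases$name, fixed = TRUE), ]

ukRows$id
```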

Now let’s create our function.

seRankingKeywords <- function(x, y, z){

apiCall <- paste("https://api4.seranking.com/system/volume?region_id=", y,

"&keyword=", x, "&token=", z, sep = "")

apiCall <- gsub(" ", "%20", apiCall)

seRankingData <- fromJSON(apiCall, simplifyDataFrame = TRUE)

seRankingData <- data.frame(x, seRankingData)

colnames(seRankingData) <- c("Keyword", "Search Volume")

output <- seRankingData

}

I’ve gone a little backwards here on the placement of the y and x variables, but that’ll make sense in a second.

How The SE Ranking Keyword Volume Function Works

As always, let’s break it down.

- seRankingKeywords <- function(x, y, z){: Our function is called seRankingKeywords and we’re using x, y and z variables

- apiCall <- paste(“https://api4.seranking.com/system/volume?region_id=”, y, “&keyword=”, x, “&token=”, z, sep = “”): As previously, we’re constructing our URL. Here, we’re using y to be our region ID, x for our keywords and z for our API key

- apiCall <- gsub(” “, “%20”, apiCall): We’re again using the gsub command to replace spaces with the URL encoding of space – %20

- seRankingData <- fromJSON(apiCall, simplifyDataFrame = TRUE): As before, we’re using jsonlite’s fromJSON command to download our data

- seRankingData <- data.frame(x, seRankingData): We’re turning that JSON data into a dataframe with the columns x (our keyword) and the search volume output from the API

- colnames(seRankingData) <- c(“Keyword”, “Search Volume”): We’re naming our columns “Keyword” and “Search Volume”

- output <- seRankingData}: Finally, we want to return our full dataframe

Again, it’s nice and simple.

To run it, let’s use our keywords dataframe from earlier.

seRankingRun <- reduce(lapply(keywords$keyword, seRankingKeywords, "180", seRankingAPI),

bind_rows)

Here, we’re using our keywords dataframe as the keywords (x), our function name (seRankingKeywords), our Google UK region ID (“180”) as y and our seRankingAPI key object as z. Nice and simple. This command will loop through all our keywords and merge the data into a single dataframe.
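If the argument order still feels backwards, this small sketch shows where each value ends up. showArgs is a made-up stand-in for seRankingKeywords that just labels its inputs instead of calling the API.

```r
# A stand-in function that only reports which value lands in which argument
showArgs <- function(x, y, z){
  paste("keyword:", x, "| region:", y, "| key:", z)
}

toyKeywords <- c("tv units", "bookcases")

# lapply feeds each keyword in as x; "180" and "XXXX" are passed along as y and z
argCheck <- lapply(toyKeywords, showArgs, "180", "XXXX")

print(argCheck)
```

Because lapply always supplies the item it’s looping over as the first argument, the keyword has to be x, and anything fixed for every call follows it.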

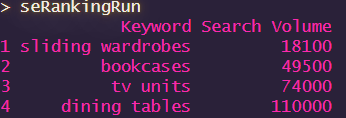

If you run it in your console and then type “seRankingRun”, you’ll see the following:

And there we go. That’s how you can use the SE Ranking API in R. There’s a lot that you can do with this API and I may well go into further depth on it in a later post when I’ve finished this series as it’s very impressive.

Now let’s look at arguably the most popular SEO tool on the market: SEMRush.

The SEMRush API In R

The SEMRush API is another great tool and is essential for a lot of SEO work, and I use it a lot with R. Disclaimer again – I’ve been a customer of SEMRush for more years than I care to remember, and I am also an affiliate, because it’s so awesome.

The SEMRush API also has the option to export to CSV or JSON. Since we’ve covered JSON in a fair amount of depth today, I’m going to show you the CSV export option in R, which is actually its default output.

Due to the way the SEMRush API handles error reporting when a keyword isn’t in its database, this is also a really good opportunity to incorporate some error handling in our function. So, let’s get going.

Gathering Keyword Data From The SEMRush API In R

Authenticating with the SEMRush API in R is very similar to how we did it with SERPAPI – just adding our API key to the query string, so that’s handy.

Let’s get going.

Firstly, we want to create an object with our API key. This works in the same way as other APIs, so very simple:

semRushAPI <- "XXXXXXXXX"

Replace the X’s with your own API key again and don’t forget the speech marks.

Now we want to start building our function and gathering our data. Here’s how.

Our function isn’t too different to our others, but we’re reading the data in a new way: with read.csv rather than our usual fromJSON command. You’ll also notice that we’re using a different separator than usual and we’re also going to use a different command to run it.

Let’s take a look at our function, and then we’ll break it down.

semRushKeywordData <- function(x, y){

apiCall <- paste("https://api.semrush.com/?type=phrase_this&key=", semRushAPI,

"&phrase=", x, "&export_columns=Ph,Nq,Cp,Co,Nr,Td,In&database=",

y, sep = "")

apiCall <- gsub(" ", "%20", apiCall)

semRushData <- read.csv(apiCall, header = TRUE, sep = ";", stringsAsFactors = FALSE)

}

How The SEMRush Keyword Research Function Works

As you can see, it’s not a million miles away from our other functions, so you can see that using APIs in R follows fairly similar rules. Let’s see what goes into this one.

- semRushKeywordData <- function(x, y){: We’re creating our semRushKeywordData function and using x and y variables

- apiCall <- paste(“https://api.semrush.com/?type=phrase_this&key=: As previously, we’ve got our endpoint and other elements that we want to paste together to build our API call URL. The ?type=phrase_this query says that we’re using the keyword research function of the API

- semRushAPI, “&phrase=”, x,: We’re adding the semRushAPI key object we created earlier and our x variable for the phrase, or our keyword from our dataset

- “&export_columns=Ph,Nq,Cp,Co,Nr,Td,In: We’re looking to extract the following columns from SEMRush with this data: Keyword, Search Volume, CPC, Competition, Number of Results, Trends and Intent. I told you there was a lot of information you could get from this API!

- &database=”, y, sep = “”): SEMRush has databases all over the world, so you can pull data relevant to your geographic region. The documentation has the full list, and for this function, I’ve set the database to our y variable, so you can change it based on your location. We’re finishing the paste command up with sep=”” as usual, so there are no spaces in the URL

- apiCall <- gsub(” “, “%20”, apiCall): As with our other API calls in our R environment, we’re using gsub to replace any spaces in our keywords with %20 to encode them

- semRushData <- read.csv(apiCall, header = TRUE, sep = “;”, stringsAsFactors = FALSE)}: Now to finish the function with our trusty read.csv command. You’ll notice that there are a couple of extra parameters in there for this one, namely header = TRUE (which does exactly what you’d think it would) and sep = “;” – this is there because the SEMRush API sends data separated by a semicolon rather than your traditional commas, largely because it nests trend data with commas
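To see why the semicolon separator matters, here’s a small offline sketch using read.csv’s text argument. The header names and values are made up for illustration and won’t match the real API output exactly.

```r
# Made-up response text in a semicolon-separated layout
toyCsv <- "Keyword;Search Volume;Trends\ntv units;1000;0.5,0.6,0.7"

toyData <- read.csv(text = toyCsv, header = TRUE, sep = ";", stringsAsFactors = FALSE)

# Three columns: the commas inside Trends have not split the row
ncol(toyData)

toyData$Trends
```

With the default comma separator, the trend values would be split across extra columns instead of staying together in one.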

And there we go, a nice simple function to get SEMRush data from the API in R. Now to run it, we do the following:

semRushOutput <- do.call(rbind, lapply(keywords$keyword, semRushKeywordData, "uk"))

You’ll notice that this is slightly different to the other commands we’ve used to run our API calls before. This is because, with the way the SEMRush API sends out CSV data, the usual “reduce” command tends to give an error. By using do.call(rbind, we’re essentially doing the same thing, but it tends to work a bit better with CSV data. You may still get some warnings, but not errors.
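On the error handling front, one possible approach is to wrap each call in tryCatch so that a single failed keyword doesn’t stop the whole run. This is a sketch under assumptions rather than a definitive pattern: safely and fakeFetch are names I’ve made up, and fakeFetch stands in for semRushKeywordData so the example runs without an API key. It also assumes the failure shows up as an R error; if the API returns an error message in the response body instead, you’d need to check the returned columns as well.

```r
# Hypothetical wrapper: run a function and return NULL if it throws an error
safely <- function(fun, ...){
  tryCatch(fun(...), error = function(e){
    message("Skipping call: ", conditionMessage(e))
    NULL
  })
}

# Stand-in for an API function that fails on one keyword
fakeFetch <- function(keyword){
  if (keyword == "missing keyword") stop("keyword not found")
  data.frame(Keyword = keyword, stringsAsFactors = FALSE)
}

toyKeywords <- c("tv units", "missing keyword", "bookcases")

results <- lapply(toyKeywords, function(k) safely(fakeFetch, k))

# Drop the failed calls, then bind the remaining rows together
combined <- do.call(rbind, Filter(Negate(is.null), results))

print(combined)
```

The failed keyword is reported with a message and left out of the final data frame, while the other two come through as normal.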

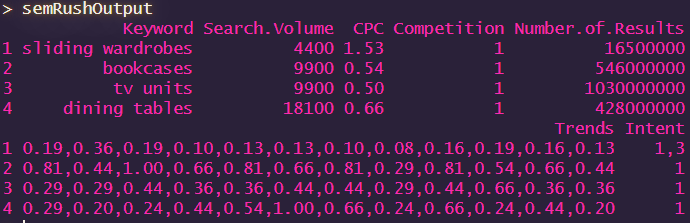

Now if you run your command on the keywords frame we created earlier, you’ll get the following:

Handy, right? And this is just the beginning of what you can do with APIs in R.

Wrapping Up

So there we have it – now you know how to use different types of APIs in R, ones with JSON and CSV outputs, and how to use them for SEO purposes.

Obviously there are millions of APIs out there, and I haven’t even scratched the surface of what’s possible, but hopefully this crash course will be enough to get you started.

As always, if you have any questions, please hit me up on Twitter/X and if you’d like to get an alert when the next post drops, please sign up for my email list below.

Until next time, when we’ll be looking at different ways of running functions with loops and applys. I hope you’ll join me.

Our Code From Today

# Install Packages

install.packages("jsonlite")

library(jsonlite)

install.packages("tidyverse")

library(tidyverse)

# SERPAPI

serpApiKey <- "XXXXXXXXXXXXX"

## Endpoint

serpApiEndpoint <- "https://serpapi.com/search.json?engine=google"

## TV Units Call

serpApiCall <- paste(serpApiEndpoint, "&q=tv%20units", "&location=United+Kingdom&google_domain=google.co.uk",

"&api_key=", serpApiKey, sep = "")

serpAPI1 <- fromJSON(serpApiCall, simplifyDataFrame = TRUE)

#Exploring JSON

str(serpAPI1)

serpAPI1$organic_results

serpAPI1$organic_results$title

## Create Keywords Dataframe

keywords <- data.frame(keyword = c("sliding wardrobes", "bookcases", "tv units",

"dining tables"))

## Running Multiple Keywords In SERPAPI

## Domain Names Function

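domainNames <- function(x){

# Strip the protocol and www., then keep the part before the first slash for every URL in x

vapply(strsplit(gsub("http://|https://|www\\.", "", x), "/"), function(parts) parts[1], character(1))

}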

## SERPAPI Function

serpAPIFun <- function(x){

require(tidyverse)

require(jsonlite)

apiCall <- paste(serpApiEndpoint, "&q=", x, "&location=United+Kingdom&google_domain=google.co.uk",

"&api_key=", serpApiKey, sep = "")

apiCall <- gsub(" ", "%20", apiCall)

serpData <- fromJSON(apiCall, simplifyDataFrame = TRUE)

serpData$organic_results$domain <- domainNames(serpData$organic_results$link)

output <- data.frame(serpData$search_parameters$q, serpData$organic_results$link,

serpData$organic_results$title, serpData$organic_results$snippet,

serpData$organic_results$domain, serpData$organic_results$position)

colnames(output) <- c("Keyword", "URL", "Title", "Description", "Domain", "Position")

return(output)

}

serpAPI2 <- reduce(lapply(keywords$keyword, serpAPIFun), bind_rows)

## SE Ranking

seRankingAPI <- "XXXXXXXXXXXXX"

seRankingDBs <- function(x){

apiCall <- paste("https://api4.seranking.com/system/search-engines?token=", x,

sep = "")

databases <- fromJSON(apiCall, simplifyDataFrame = TRUE)

output <- data.frame(databases)

}

seRankingDatabases <- seRankingDBs(seRankingAPI)

## SE Ranking Keyword Volume

seRankingKeywords <- function(x, y, z){

apiCall <- paste("https://api4.seranking.com/system/volume?region_id=", y,

"&keyword=", x, "&token=", z, sep = "")

apiCall <- gsub(" ", "%20", apiCall)

seRankingData <- fromJSON(apiCall, simplifyDataFrame = TRUE)

seRankingData <- data.frame(x, seRankingData)

colnames(seRankingData) <- c("Keyword", "Search Volume")

output <- seRankingData

}

seRankingRun <- reduce(lapply(keywords$keyword, seRankingKeywords, "180", seRankingAPI),

bind_rows)

# SEMRush

semRushAPI <- "XXXXXXXXXX"

semRushKeywordData <- function(x, y){

apiCall <- paste("https://api.semrush.com/?type=phrase_this&key=", semRushAPI,

"&phrase=", x, "&export_columns=Ph,Nq,Cp,Co,Nr,Td,In&database=",

y, sep = "")

apiCall <- gsub(" ", "%20", apiCall)

semRushData <- read.csv(apiCall, header = TRUE, sep = ";", stringsAsFactors = FALSE)

}

semRushOutput <- do.call(rbind, lapply(keywords$keyword, semRushKeywordData, "uk"))